Apple’s M1 is Going to Change EVERYTHING (The Industry Will ‘x86it’)

x86 is Getting 187ed as The Industry x86its

Anyone who can look at charts and read reviews is finding it hard to argue, at this point, that Apple’s M1 is not a game-changer. For the people who have had the good fortune to actually use one of these machines, well, they are (mostly) completely blown away.

Mark my words: The rest of the industry will follow this exit from the x86 instruction set. An ‘x86it,’ if you will. It’s becoming clear to OEMs that if they are going to stand a chance at competing with Apple, they are going to have to make some drastic changes.

What Makes the M1 so Fast?

There are several factors involved in the M1’s high level of performance. The chip has an unusually large number of instruction decoders and a lot of OoOE (Out-of-Order Execution) hardware. But there is something a little more important that I would like to talk about.

Apple is doing something really interesting with the M1. They are adding (a lot of) specialized hardware to work alongside the main general-purpose number-crunching parts of the M1.

Rather than stuffing a large amount of general-purpose hardware into the M1, Apple has instead opted to add more hardware-acceleration for specific tasks.

Apple has designed quite a substantial GPU into their shiny new chip.

It’s important to note that GPUs (Graphics Processing Units) are about more than graphics these days. They are also used for many other tasks that require massive parallelism.

They’ve also fitted the M1 with a 16-core ‘Neural Engine,’ which is hardware dedicated specifically to accelerating machine learning applications.

For good measure, they’ve thrown in some beefy DSPs (Digital Signal Processors) and dedicated video encoding circuitry.

What is an Accelerator?

A hardware accelerator is a processor that is designed for a specific purpose. Instead of having a general-purpose CPU perform a task in software, a hardware accelerator, such as a GPU, performs that task on specially designed circuitry.

Anything an accelerator can do, a general-purpose processor can also do, just (usually) a whole lot slower. The reason is that a task that forces a CPU to repeat many operations can often be handled by a specialized piece of accelerator silicon in far fewer steps, sometimes in just a single one.
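A toy cost model can make the "many steps versus one step" idea concrete. In this Python sketch, `cpu_dot` computes a dot product the way a simple scalar core would, one multiply-accumulate per step, while `accelerator_dot` models a hypothetical multiply-accumulate array wide enough to take the whole vector at once. Both names are invented for illustration, and the step counts describe the modeled hardware, not how long this Python code takes (Python still loops inside `sum`).

```python
def cpu_dot(a, b):
    """Scalar path: one multiply-accumulate per step, issued in sequence."""
    acc = 0
    steps = 0
    for x, y in zip(a, b):
        acc += x * y  # one multiply and one add
        steps += 1
    return acc, steps


def accelerator_dot(a, b):
    """Hypothetical fixed-function unit: every lane multiplies in parallel and an
    adder tree sums the products, so the whole vector counts as one step."""
    products = [x * y for x, y in zip(a, b)]  # conceptually, all lanes at once
    return sum(products), 1


a = [1, 2, 3, 4]
b = [5, 6, 7, 8]
print(cpu_dot(a, b))          # (70, 4)
print(accelerator_dot(a, b))  # (70, 1)
```

Both paths produce the same answer; the only thing the model changes is how many steps the hardware needs to get there.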

The Processor/Accelerator Data Bus

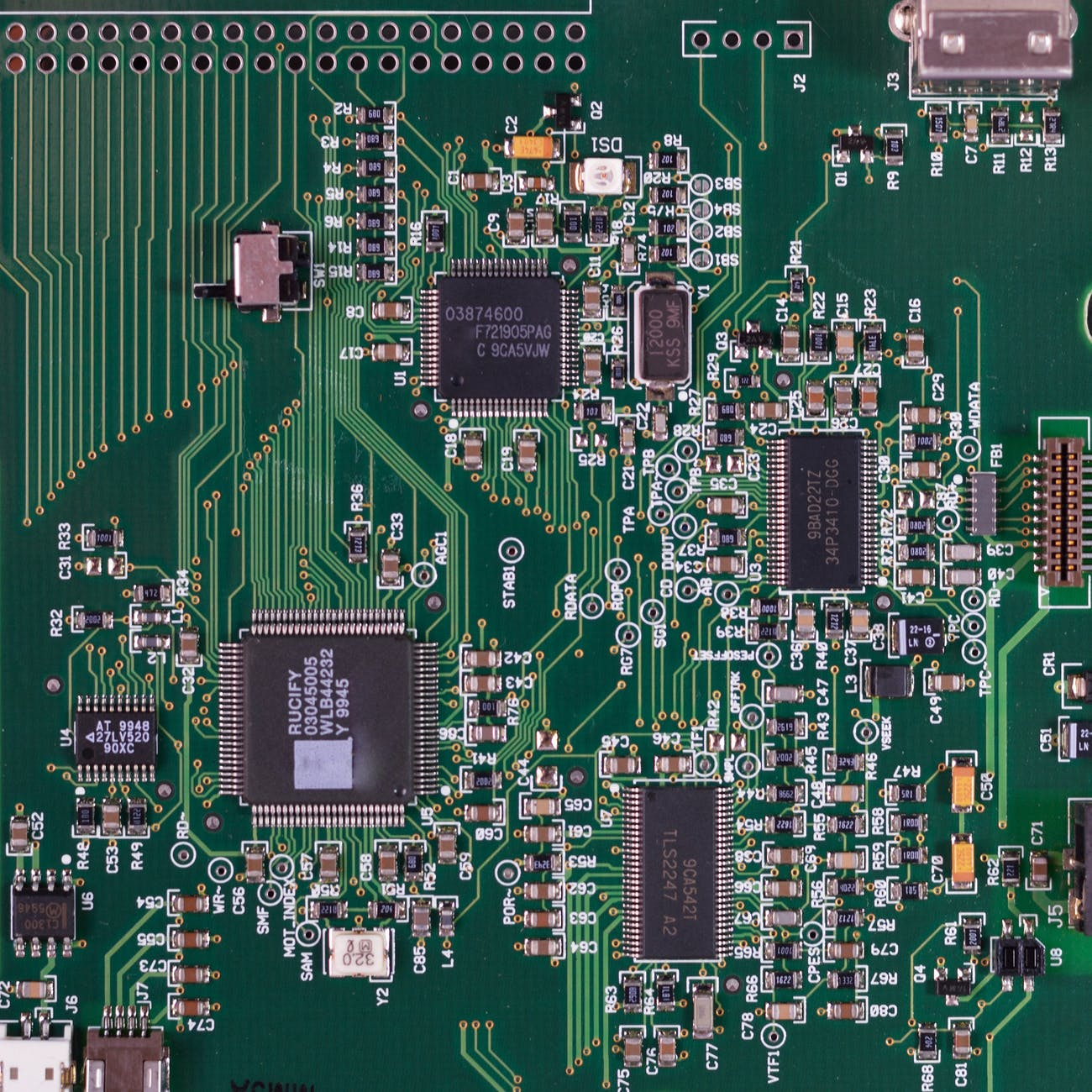

Data, stored as numbers that are represented as pulses of current, is pushed through etched conductive pathways (tiny wires) to reach different areas of the chip.

To perform a mathematical operation on a number, we have to put it somewhere, so we store numbers in ‘registers.’ A decoder is used to create a connection between the registers and memory.

The decoder uses an LSU (Load Store Unit), which places a memory address on the address bus. The memory then receives that address.

After that, the memory location matching the address is selected, and its contents are transmitted onto the data bus, from which they can be loaded into a register.
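To make the load sequence above concrete, here is a toy Python model. The class and function names (`Memory`, `LoadStoreUnit`, `execute`) and the `("LOAD", "r0", 3)` instruction format are hypothetical, invented for illustration. Real hardware does this with wires and control signals, not objects, and the M1's actual load path is far more complex.

```python
class Memory:
    """Toy main memory: a flat list of numbered cells."""

    def __init__(self, size):
        self.cells = [0] * size

    def read(self, address_bus):
        # The value on the address bus selects exactly one cell,
        # and that cell's contents are driven onto the data bus.
        return self.cells[address_bus]


class LoadStoreUnit:
    """Hypothetical LSU: turns a load request into bus traffic."""

    def __init__(self, memory):
        self.memory = memory

    def load(self, address):
        address_bus = address                     # 1. place the address on the address bus
        data_bus = self.memory.read(address_bus)  # 2. memory selects the cell, drives the data bus
        return data_bus                           # 3. the data bus value heads back to a register


def execute(instruction, registers, lsu):
    """Toy decoder: recognize a LOAD and hand it to the LSU."""
    opcode, dest, address = instruction
    if opcode != "LOAD":
        raise ValueError(f"unsupported opcode: {opcode}")
    registers[dest] = lsu.load(address)


registers = {"r0": 0, "r1": 0}
memory = Memory(16)
memory.cells[3] = 42
execute(("LOAD", "r0", 3), registers, LoadStoreUnit(memory))
print(registers)  # {'r0': 42, 'r1': 0}
```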

Peripherals and accelerators are accessed in the same way as memory locations, a scheme known as memory-mapped I/O. Storage drives, network controllers, input devices like the keyboard and mouse, GPUs, and accelerators such as ‘Neural Engines’ all have memory addresses mapped to them.

So, all of these processors have addresses that the CPU can communicate with. By writing to those addresses, the CPU can hand all kinds of work to other processors, such as the M1’s Neural Engine.
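The idea that a device is "just another address" is easiest to see in a toy model. In the Python sketch below, a hypothetical `Bus` routes each address to whichever device owns that range: ordinary RAM, or an invented `AcceleratorRegisters` device with a command register at offset 0 and a status register at offset 1. The address map (RAM at `0x0000`, the accelerator at `0x8000`) is made up for illustration and is not the M1's real layout.

```python
class RAM:
    """Plain memory: reads and writes just store values."""

    def __init__(self, size):
        self.cells = [0] * size

    def read(self, offset):
        return self.cells[offset]

    def write(self, offset, value):
        self.cells[offset] = value


class AcceleratorRegisters:
    """Hypothetical device: offset 0 = command register, offset 1 = status register."""

    def __init__(self):
        self.command = 0
        self.status = 0

    def read(self, offset):
        return self.status if offset == 1 else self.command

    def write(self, offset, value):
        if offset == 0:
            self.command = value
            self.status = 1  # pretend the device acknowledges the new command


class Bus:
    """Routes each address to the device whose range contains it."""

    def __init__(self):
        self.regions = []  # list of (base, size, device)

    def map(self, base, size, device):
        self.regions.append((base, size, device))

    def _find(self, address):
        for base, size, device in self.regions:
            if base <= address < base + size:
                return device, address - base
        raise ValueError(f"no device mapped at {address:#x}")

    def read(self, address):
        device, offset = self._find(address)
        return device.read(offset)

    def write(self, address, value):
        device, offset = self._find(address)
        device.write(offset, value)


bus = Bus()
bus.map(0x0000, 0x1000, RAM(0x1000))
npu = AcceleratorRegisters()
bus.map(0x8000, 0x2, npu)

bus.write(0x0010, 99)  # ordinary memory write
bus.write(0x8000, 7)   # same kind of write, but it lands in the device's command register
print(bus.read(0x0010), bus.read(0x8001))  # 99 1
```

The CPU-side code uses the same `write` call for both targets; only the address decides whether the value lands in memory or in the accelerator.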

So, machine learning tasks, for example speech recognition, can be sent to the M1’s Neural Engine. Sure, any general-purpose CPU can do that, but the M1 has dedicated AI hardware to perform those kinds of calculations. So, the rest of the computer is free to do other things while the dedicated Neural Engine is chomping away on all that voice data.
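The offloading pattern can be sketched with Python's standard `concurrent.futures` module. Here a single worker thread stands in for the Neural Engine, and `recognize_speech` is a hypothetical placeholder that just sleeps to simulate the accelerator being busy; it does not perform real speech recognition or talk to any Apple hardware. The point is the shape of the code: submit the job, keep doing other work, and wait only when the result is needed.

```python
import time
from concurrent.futures import ThreadPoolExecutor


def recognize_speech(samples):
    """Hypothetical stand-in for a job offloaded to a dedicated unit."""
    time.sleep(0.1)  # simulate the accelerator working; sleep lets the main thread run
    return f"transcribed {len(samples)} samples"


def other_cpu_work():
    """Unrelated work the CPU gets on with in the meantime."""
    return sum(range(1_000))


with ThreadPoolExecutor(max_workers=1) as accelerator:
    future = accelerator.submit(recognize_speech, [0] * 16_000)  # hand off the job
    cpu_result = other_cpu_work()  # runs while the "accelerator" is busy
    transcript = future.result()   # block only when the answer is actually needed

print(cpu_result, transcript)  # 499500 transcribed 16000 samples
```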

A Need For Parallelism

It has become clear over the last decade that a CPU’s clock frequency, at least for a CPU made of silicon, isn’t going to move much past 5 GHz. Not far beyond that, heat output and energy consumption get out of hand. Basically, at least for now, humanity has hit its limit on how far it can push clock speed.

Humans are, however, really good at something else: adding more transistors. Although this practice has slowed down a bit since Gordon Moore first laid out his expectations, we have been able to fit impressive numbers of transistors on chips. So, if we are having trouble making our number-crunchers crunch numbers faster, then we need to focus on how we can make them crunch more numbers at the same time.
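A rough back-of-the-envelope model shows why "more at the same time" pays off. Idealized peak throughput is clock frequency × number of cores × operations each core can complete per cycle. The helper name `peak_ops_per_second` and both configurations below are hypothetical round numbers, not measured M1 or x86 figures, and real chips rarely sustain their peak.

```python
def peak_ops_per_second(clock_hz, cores, ops_per_cycle_per_core):
    """Idealized peak: assumes every core completes its full width every cycle."""
    return clock_hz * cores * ops_per_cycle_per_core


# Hypothetical design A: clock pushed to the ~5 GHz wall, a few narrow cores.
fast_clock = peak_ops_per_second(5.0e9, 4, 1)
# Hypothetical design B: lower clock, but more cores that each do more per cycle.
wide = peak_ops_per_second(3.0e9, 8, 4)

print(f"{fast_clock:.2e} vs {wide:.2e}")  # 2.00e+10 vs 9.60e+10
```

On paper, design B comes out almost five times faster despite a 40% lower clock, which is exactly the trade of frequency for parallelism described above.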
